A Simple Example of Integrating Kafka and Flume

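Architecture: a Flume exec source tails /opt/module/datas/flume.log, the events pass through a memory channel, and a Kafka sink publishes them to the topic first, where a Kafka consumer reads them.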

Step 1: Configure Flume

Create the configuration file flume-kafka.conf with the following content:

```properties
# define
a1.sources = r1
a1.sinks = k1
a1.channels = c1

# source
a1.sources.r1.type = exec
a1.sources.r1.command = tail -F /opt/module/datas/flume.log
a1.sources.r1.shell = /bin/bash -c

# sink
a1.sinks.k1.type = org.apache.flume.sink.kafka.KafkaSink
# Kafka cluster addresses and the target topic
a1.sinks.k1.kafka.bootstrap.servers = hadoop201:9092,hadoop202:9092,hadoop203:9092
a1.sinks.k1.kafka.topic = first
a1.sinks.k1.kafka.flumeBatchSize = 20
a1.sinks.k1.kafka.producer.acks = 1
a1.sinks.k1.kafka.producer.linger.ms = 1

# channel
a1.channels.c1.type = memory
a1.channels.c1.capacity = 1000
a1.channels.c1.transactionCapacity = 100

# bind
a1.sources.r1.channels = c1
a1.sinks.k1.channel = c1
```

Start a Kafka consumer in IDEA.
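You can check the wiring offline before starting the agent. The Python sketch below is not part of Flume. It parses the subset of properties syntax that flume-kafka.conf uses and reports three kinds of problems:

- an undeclared channel
- a memory channel whose transactionCapacity exceeds its capacity
- a Kafka sink whose flumeBatchSize exceeds its channel's transactionCapacity

The last check matters because the sink takes up to flumeBatchSize events in one channel transaction, so an oversized batch can fail once the channel backs up. The function names and the embedded excerpt are illustrative assumptions. In practice you would pass in the text of job/flume-kafka.conf.

```python
# Illustrative wiring check for one Flume agent (a sketch, not a Flume tool).

CONF_EXCERPT = """\
# define
a1.sources = r1
a1.sinks = k1
a1.channels = c1
# source
a1.sources.r1.type = exec
a1.sources.r1.command = tail -F /opt/module/datas/flume.log
# sink
a1.sinks.k1.type = org.apache.flume.sink.kafka.KafkaSink
a1.sinks.k1.kafka.topic = first
a1.sinks.k1.kafka.flumeBatchSize = 20
# channel
a1.channels.c1.type = memory
a1.channels.c1.capacity = 1000
a1.channels.c1.transactionCapacity = 100
# bind
a1.sources.r1.channels = c1
a1.sinks.k1.channel = c1
"""


def load_flume_properties(text):
    """Parse 'key = value' lines, skipping blank lines and '#' comments.

    Only the subset of Java-properties syntax used in this config is
    handled (no line continuations or escape sequences).
    """
    props = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition("=")
        if sep:
            props[key.strip()] = value.strip()
    return props


def check_agent(props, agent):
    """Return a list of wiring problems for `agent`; an empty list means OK."""

    def get(key):
        return props.get(f"{agent}.{key}")

    def words(key):
        return (get(key) or "").split()

    problems = []
    channels = set(words("channels"))

    # Every source must be bound to at least one declared channel.
    for src in words("sources"):
        bound = words(f"sources.{src}.channels")
        if not bound:
            problems.append(f"source {src} is not bound to a channel")
        for ch in bound:
            if ch not in channels:
                problems.append(f"source {src} uses undeclared channel {ch}")

    # A transaction cannot hold more events than the channel itself.
    for ch in sorted(channels):
        cap = get(f"channels.{ch}.capacity")
        txn = get(f"channels.{ch}.transactionCapacity")
        if cap and txn and int(txn) > int(cap):
            problems.append(f"channel {ch}: transactionCapacity > capacity")

    # Each sink needs a declared channel; its batch must fit one transaction.
    for sink in words("sinks"):
        ch = get(f"sinks.{sink}.channel")
        if ch not in channels:
            problems.append(f"sink {sink} uses undeclared channel {ch}")
            continue
        batch = get(f"sinks.{sink}.kafka.flumeBatchSize")
        txn = get(f"channels.{ch}.transactionCapacity")
        if batch and txn and int(batch) > int(txn):
            problems.append(
                f"sink {sink}: flumeBatchSize > channel transactionCapacity")
    return problems


if __name__ == "__main__":
    issues = check_agent(load_flume_properties(CONF_EXCERPT), "a1")
    print(issues or "wiring OK")
```

The agent name passed to check_agent plays the same role as -n a1 on the flume-ng command line. Only keys under that prefix are inspected.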

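Start Flume. Run the command from the directory that contains conf/ and job/, because both paths are relative. In the command, -c points to the configuration directory, -f to the agent configuration file, and -n names the agent, which must match the a1 prefix used in flume-kafka.conf.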

flume-ng agent -c conf -f job/flume-kafka.conf -n a1

Write data to /opt/module/datas/flume.log.
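The tutorial does not show how the data was written. In a shell, `echo "..." >> /opt/module/datas/flume.log` is the usual way. The sketch below does the same from Python, assuming the process is allowed to write to that path; append_log_lines is a hypothetical helper name. The exec source runs `tail -F` on the file, so each newline-terminated line appended to it becomes one Flume event. For that reason the helper opens the file in append mode, like `>>`, and ends every line with exactly one newline.

```python
from pathlib import Path


def append_log_lines(path, lines):
    """Append each line to `path`, terminated by exactly one newline.

    The Flume exec source tails this file with `tail -F`, so every appended,
    newline-terminated line is read as one event. Append mode ("a") keeps
    the existing content, like `>>` in the shell.
    """
    target = Path(path)
    with target.open("a", encoding="utf-8") as f:
        for line in lines:
            # Normalise the ending so one input string yields one log line.
            f.write(line.rstrip("\n") + "\n")
    return target
```

Usage: `append_log_lines("/opt/module/datas/flume.log", ["kafka and flume are now integrated"])`. UTF-8 is written explicitly because the original test data was non-ASCII text.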

Data written:

kafka and flume are now integrated, hehe hahaha mm-hmm

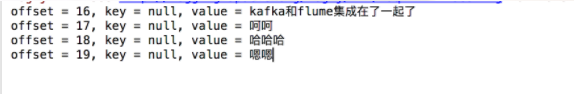

The Kafka consumer receives the same data.
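The original does not show the code of the consumer run from IDEA. Another way to check the topic is the console consumer that ships with Kafka, assuming Kafka's bin directory is on PATH. It can read the topic first from any one broker of the cluster. The sketch below only assembles that command line; console_consumer_cmd is a hypothetical name. The `--from-beginning` flag also replays records written before the consumer started.

```python
import shlex


def console_consumer_cmd(bootstrap_server, topic, from_beginning=True):
    """Assemble a kafka-console-consumer.sh invocation as an argv list."""
    cmd = [
        "kafka-console-consumer.sh",
        "--bootstrap-server", bootstrap_server,  # any one reachable broker
        "--topic", topic,
    ]
    if from_beginning:
        # Also read records produced before this consumer started.
        cmd.append("--from-beginning")
    return cmd


if __name__ == "__main__":
    # Print a copy-pasteable shell command for the topic used by the sink.
    print(shlex.join(console_consumer_cmd("hadoop201:9092", "first")))
```

You can pass the returned list to `subprocess.run` to start the consumer. It keeps running and printing each record's value until interrupted.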

Reprinted from: http://ynuwi.baihongyu.com/
